In early 2020 Art Processors began creating five digital interactive exhibits for the Museum’s new Life in Australia gallery, opening in mid-2021.

One of the exhibits is a playful and educational window onto one of Australia’s most celebrated creatures: the platypus.

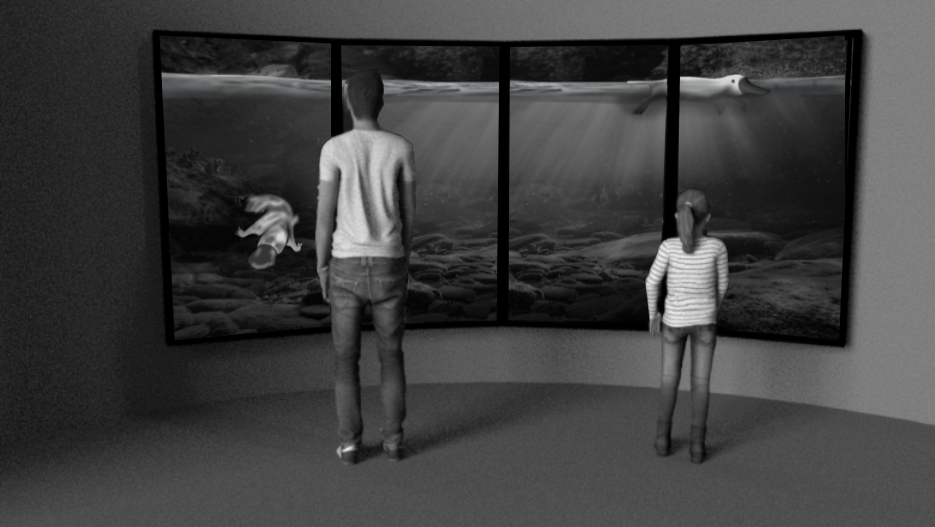

As nocturnal and typically shy animals that spend most of their time underwater, platypuses aren’t the kind of creature you can easily get to know. That’s why we’re working on a 3D aquarium-like display that will let you not only view the platypus up close but also interact with it and its habitat.

Instead of touching the screen, you’ll be able to use your own body movements and gestures – picked up using state-of-the-art motion-tracking technology – to interact with and control the 3D-animated platypus.

Through your movements, you’ll also be able to unlock special features hidden in the on-screen underwater environment.

Let’s go behind the scenes and see how all this is being brought to life.

Development during lockdown

When designing an exhibit, we think carefully about the physical space it will occupy. To properly understand a space, we often build full-scale mock-ups, or simulate the space using projectors or screens.

But when Melbourne went into lockdown at the onset of the Covid-19 pandemic in March, we had to flip our usual approach. Suddenly we had a new problem to solve: how do we develop a motion-controlled experience for the Museum in Canberra while working from home in Melbourne with no access to our studio?

Starting with what we know

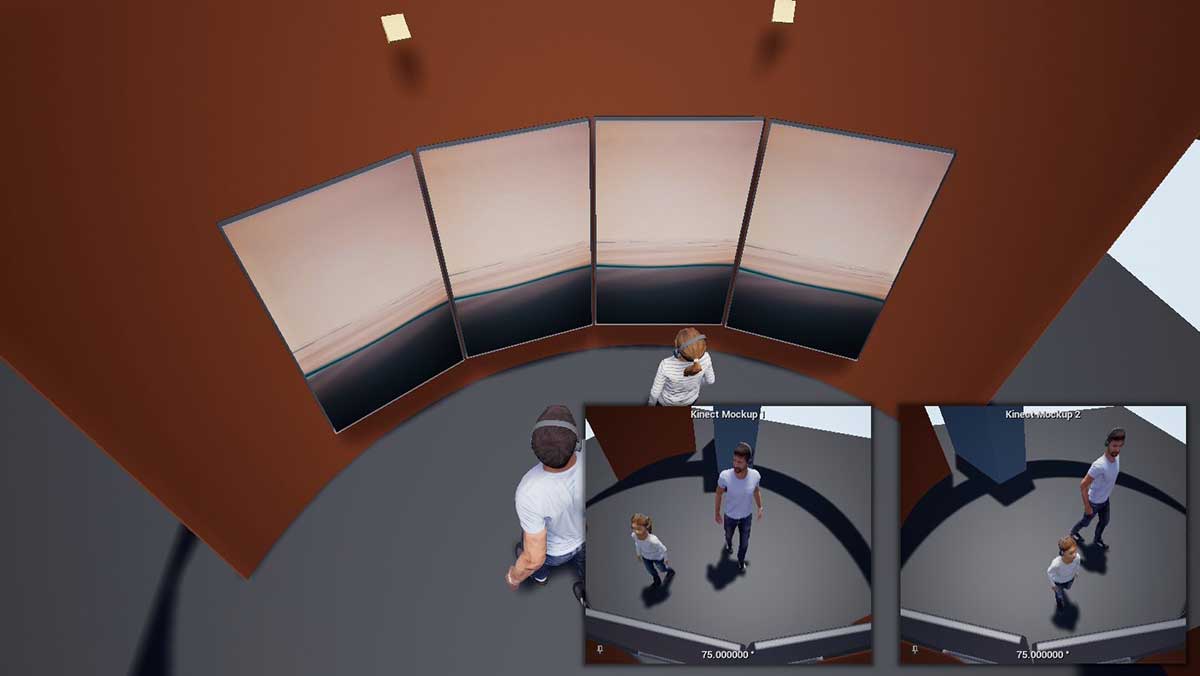

When developing a motion-controlled experience, we often start by creating a simple 3D representation of the space: a virtual mock-up. This helps us understand how many people might be able to use the experience at the same time, and how well the motion-control cameras will work in the space.

Screens within screens

Once we’ve created a virtual mock-up, we usually build a physical one.

We had planned to create a curved wall in our Melbourne studio using basic materials and a large projector. But with new restrictions in place, we needed to find a way to achieve the same results from home.

So we asked ourselves: could we modify our virtual mock-up to act more like a physical one, showing what the platypus experience would look like on the four screens and how someone could interact with it?

To do this, we started with a prototype we had previously built to test water interaction. Instead of showing it directly on a monitor, we displayed it on the screens inside our virtual mock-up. We called this our interactive mock-up.

In this video you can see this idea in action.

This video has no sound

Let’s quickly break down what we’re looking at in this video:

- The interactive mock-up of the space, with the live screens showing the water-interaction prototype.

- The output from the water prototype.

- A webcam view of us testing the water interaction.

- The motion-tracking software that follows our movements.

Bringing back depth through VR

Creating an interactive mock-up of the exhibit helped us see how it could look on the four screens. But working in a 2D digital environment took away the sense of depth and made it harder for us to test how people might interact with the experience.

So we began working in virtual reality (VR). Using VR, we could interact with a full-size version of the mock-up, which helped us get a feel for the scale of the experience in relation to the person using it.

In the video below, you can see the VR version of the mock-up. Notice that the layout is the same as in the 2D version, but the background now shows what the person sees in VR.

This video has no sound

Final production

Our next hurdle will be figuring out the best way to collaborate remotely on more detailed design and development decisions as we move into production.

Despite the challenges of the ‘new normal’ we’ve found ourselves in, working with interactive and VR mock-ups has proved a hugely effective way to collaborate remotely. We’re excited to see how far we can take it.